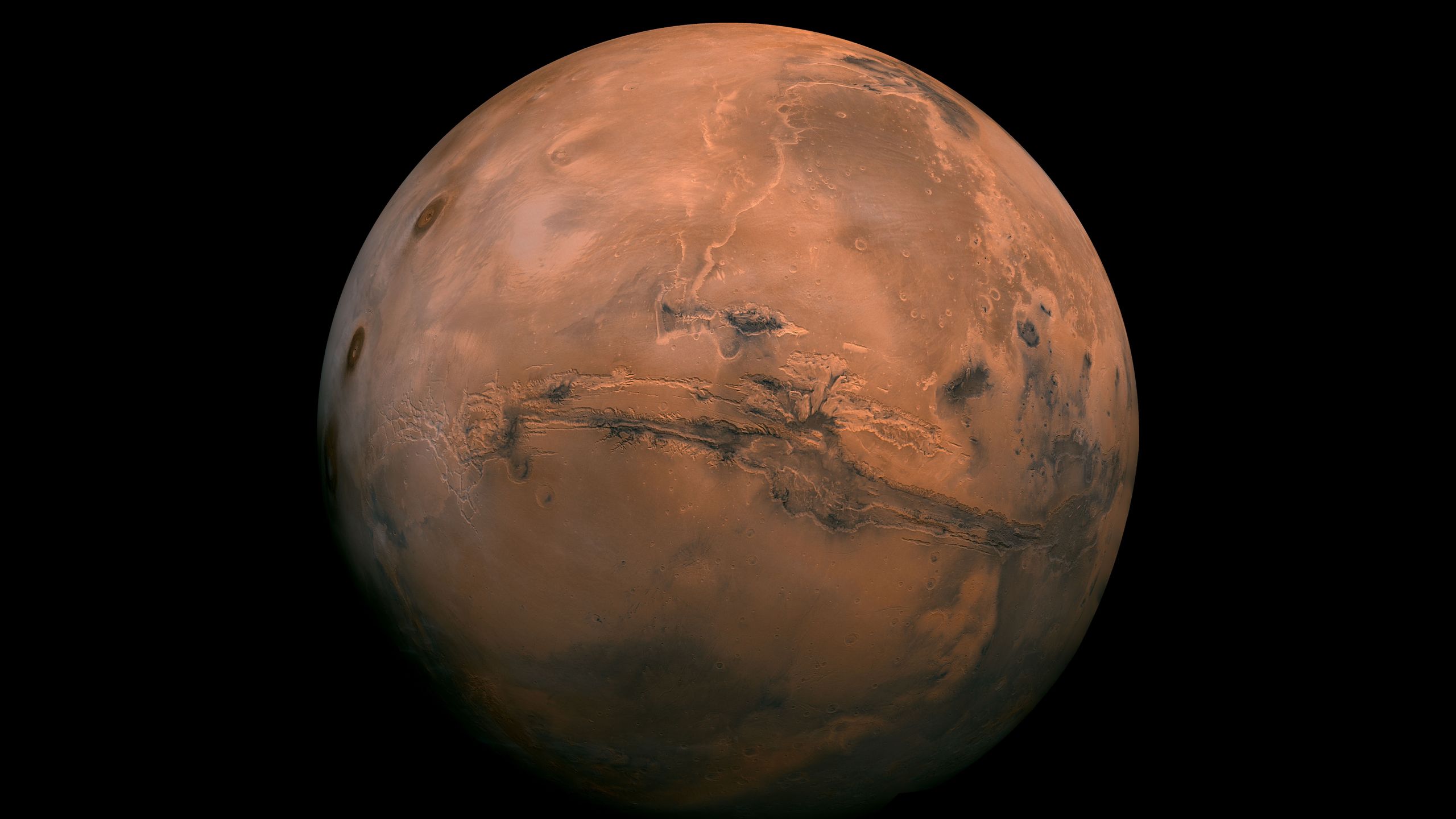

A remarkably hardy bacterium can survive pressures similar to those generated when asteroid impacts blast debris off Mars, a new study has found, suggesting that microbes could endure interplanetary journeys and potentially seed life on other…

Author: admin

-

US demand for unconditional surrender ‘dream they should take to their grave’: Iranian President – The Australian

- Tehran pounded in week two of US-Israel war, Iran targets Israel – Al Jazeera

- Day 7 of Middle East conflict — Trump says no…

-

Apple M5 Max Beats Desktop M3 Ultra in Benchmarks – Gadget Hacks

- Apple introduces the new MacBook Air with M5 – Apple

- Apple’s New Laptop Fixes the One Flaw That’s Been Secretly Throttling Your External DAC Setup – Headphonesty

- Hear that? That’s the…

-

Trump tells CNN he’s not worried whether Iran becomes a democratic state

President Donald Trump told CNN Friday that Iran’s leadership has been “neutered” and that he’s looking for new leadership that will treat the United States and Israel well, even if that’s a religious…

-

ZTE Reports 2025 Revenue of RMB 133.90 Billion, Advancing Full-Stack AI Capabilities – ZTE

- ZTE Board Clears 2025 Reports and Seeks Mandate for RMB8 Billion Bond Issue – TipRanks

- ZTE Posts Audited 2025 Results and Proposes Cash Dividend – TipRanks

- ZTE Proposes Final 2025 Cash Dividend, Key Details Pending – TipRanks

-

No. 14 Kansas to Host Kansas State in Dillons Sunflower Showdown Saturday

LAWRENCE, Kan. – No. 14 Kansas (21-9, 11-6) concludes its regular season by playing host to Kansas State (12-18, 3-14) in the Dillons Sunflower Showdown on Saturday, March 6. Tip from Allen Fieldhouse is set for 1 p.m. CT and the game will be…

-

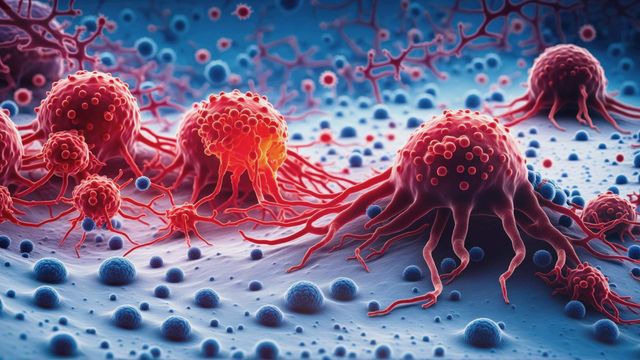

New Study Finds Shared Weakness in Brain Tumors

Research uncovering the origin of pineoblastoma, a rare pediatric brain tumor, has also revealed a dependency across multiple brain tumor types that share a similar molecular program. Scientists at St. Jude Children’s Research Hospital,…

-

Leslie Odom Jr, Broadway’s original Aaron Burr, to join London cast of Hamilton

Leslie Odom Jr, who originated the role of Aaron Burr in Hamilton on Broadway, is to return to the hit musical – this time making his West End debut.

The actor will join the London cast at the Victoria Palace theatre for nine weeks this summer…

-

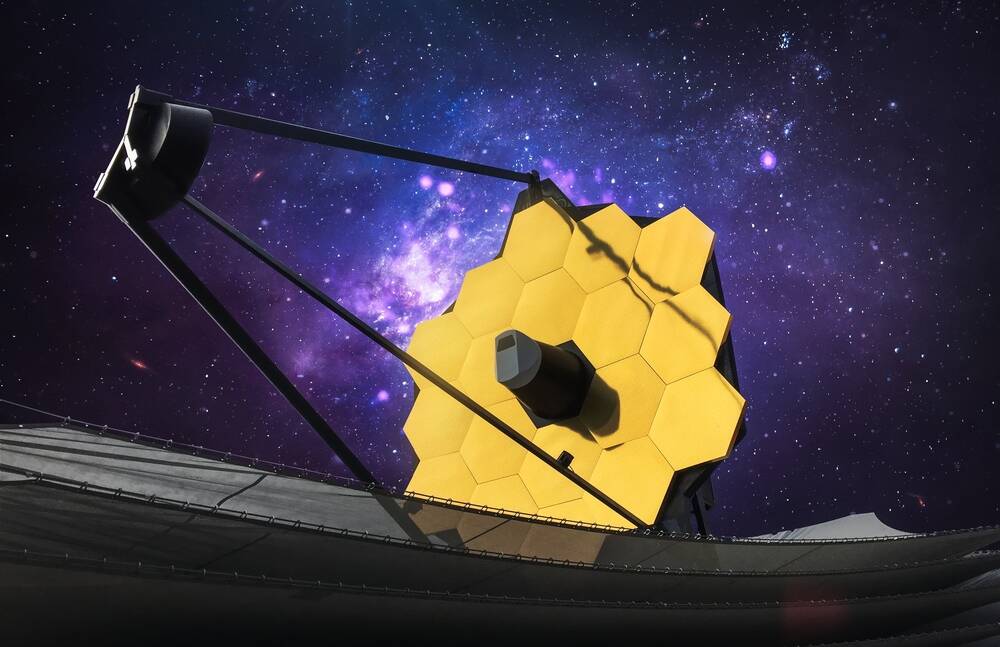

Scientists rule out a 2032 lunar impact for asteroid 2024 YR4 – The Register

Scientists have ruled out the possibility that the near-Earth asteroid 2024 YR4 might hit the Moon on December 22, 2032.

Previous analyses had given the event a 4.3 percent chance, but refinements to measurements of its orbit by the European…

-

Trump demands Iran’s ‘unconditional surrender’ as bombs pound Tehran and Beirut

Donald Trump has said only Iran’s “unconditional surrender” will bring an end to the offensive launched seven days ago, as the US and Israel carried out some of the heaviest bombardments so far in the conflict.

“There will be no deal with…
